What Are The Best Web Scraping Tools For Beginners?

If you’re reading this article, I’m going to assume you’re human. (Yeah, I’m a genius.) And if you’re human, you probably know how it feels to find out that some things are a lot easier than we make them out to be. When we get overwhelmed by an idea or opportunity, we tend to back away. While this isn’t necessarily a bad thing (not all ideas are good ideas), it can also keep us from trying new things that make our lives better. Take web scraping, for instance. When I first learned about web scraping, I got overwhelmed very quickly. I read articles about how useful web scraping software is for businesses and individuals, but I had no idea where to start.

So, what do you do if you’re like me? Even if you’ve heard about the benefits of using data, most of the web scraping reviews and product pages sound so complicated that they make it all seem unreachable. Finding a valuable web scraping tool can be tricky, but finding a tool that’s valuable and beginner-friendly can seem nearly impossible. Well, I hope this article debunks that myth. I’m not going to talk about complicated niche coding terms that scare people away. But still, I’ll be honest: This just isn’t something you learn overnight, and it’s going to take some time before you become a data scraping expert. But this article isn’t for experts. It’s for the ones who just need to start, and if you’re anything like me, that’s the most important step.

Why Should You Use Data Scraping Tools?

I could go on and on about this subject, but the truth is, there are far more reasons to use free web scraping tools than I could cover in this article. Even we at Scraping Robot learn about new scraping use cases every day, based on the ideas brought to us by customers with a wide variety of skill sets, needs, and scraping capabilities.

One of the most popular reasons to scrape the web is to conduct product research, which can really help you gain the edge you need over your competitors. But it can also help individuals who are just looking to find the best prices on a product. If you can access large volumes of data very quickly, you can gain a better understanding of your market position and make more informed decisions about your future goals. For our purposes in this article, I’ll scrape product information about vinyl records on Amazon, which will hopefully show you the benefits and use cases of scraping for product information.

Or maybe you want to improve your marketing and public relations efforts by scraping social media websites, gaining valuable information about your followers. You can use this information to understand what people are saying about your brand and why, giving you a stronger foundation to improve your engagement goals.

There are plenty of other reasons to use data scraping tools, so check out the rest of our blog for more ideas!

What Defines the Best Tools for Web Scraping?

If you want to see our thoughts about these products at a glance, check out the rating we include under each tool’s heading. This 1-10 scale takes the following factors into consideration:

Cost

It’s no secret that this is one of the first (if not the first) factors people want to know about when choosing a product, and finding the best web scraper service is no different. However, since not all web scrapers are created equal, giving the best cost ratings to the cheapest products doesn’t really tell the whole story. So it’s important to consider the other factors on this list, too.

Ease of use

Ding, ding, ding, now this is what I’m talking about. If you’re like me (which, since you’re reading this article, I assume that you are), you don’t want to spend hella time figuring out how to use a tool. Especially when you’re not fully convinced about the importance of data scraping software anyway. (Yet.)

Scraping Robot believes that scraping should be available to everyone, not just coding experts and developers. That’s why “Ease of use” is one of the first things I considered with each product in this article. Does the company offer tutorials? If so, what’s the content and quality of those tutorials and instructions? When you’re just getting started with web scraping, it can be hard to understand how the scraper works and what input it needs from you to do its job. If the site doesn’t offer tutorials, that’s a big indicator that you’re probably going to have to put a lot of time and effort into just learning the product, and I don’t want to do that. So, no tutorials (or bad tutorials) = no points for this category. Sorry not sorry.

JavaScript rendering

If your scraper can “render JavaScript,” as they say, then your pool of websites just got a whole lot bigger (and more useful!). That’s because you can scrape dynamic sites, or sites that are programmed to change based on input from their users (e.g., Google Maps). A lot of scrapers don’t render JavaScript because it’s much more expensive to run on the hardware, and it can take forever to load and scrape multiple pages at a time with JavaScript turned on. But most modern websites use JavaScript in at least some form, so the ability to render that kind of code really increases the quality and quantity of data you can access with your scraper.
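Curious what “rendering” actually changes? Here’s a minimal Python sketch (Python is just our pick for illustration; none of the tools below require code). It assumes a made-up page whose vinyl listing gets inserted by a script after the page loads. A scraper that only reads the raw HTML, like Python’s built-in `html.parser` here, never runs that script, so it comes back empty-handed. A scraper that renders JavaScript runs the script first and ends up seeing the same thing as the hand-written “already rendered” page.

```python
from html.parser import HTMLParser

# A made-up page: the listing is inserted by JavaScript after the page loads,
# so the raw HTML that a non-rendering scraper downloads has an empty container.
DYNAMIC_PAGE = """
<html><body>
  <div id="results"></div>
  <script>
    document.getElementById("results").innerHTML =
      '<span class="title">Rumours (Vinyl)</span>';
  </script>
</body></html>
"""

# The same listing as it would look once a browser has run the script.
STATIC_PAGE = """
<html><body>
  <div id="results"><span class="title">Rumours (Vinyl)</span></div>
</body></html>
"""


class TitleCollector(HTMLParser):
    """Collects the text inside <span class="title"> tags, without running any scripts."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "span" and ("class", "title") in attrs:
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title and data.strip():
            self.titles.append(data.strip())


def titles_without_rendering(html):
    """Parse the HTML as-is; <script> contents are treated as plain text, never executed."""
    parser = TitleCollector()
    parser.feed(html)
    parser.close()
    return parser.titles


print(titles_without_rendering(DYNAMIC_PAGE))  # []
print(titles_without_rendering(STATIC_PAGE))   # ['Rumours (Vinyl)']
```

Actually rendering the dynamic page typically takes a real (often headless) browser, which is exactly why it costs more hardware and time.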

Desktop software

Some tools allow you to scrape the web using browser extensions or simple modules, but many scrapers also offer a desktop app option. Depending on the type of scraping you want to do, both options are super helpful. However, if you’re going to use a desktop scraper, you want to make sure it supports a robust proxy management system. In layman’s terms, that means your scraper can route high volumes of requests through rotating proxies (different IP addresses) without slowing down or getting banned.
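Under the hood, basic proxy rotation can be as simple as cycling through a pool of IP addresses so no single address sends every request. The Python sketch below shows round-robin rotation with placeholder proxies (from a documentation-only IP range) and made-up URLs. Real tools layer things like health checks and retries on top, and this isn’t how any particular product in this article does it.

```python
from itertools import cycle
from urllib.request import ProxyHandler, build_opener

# Placeholder proxy addresses from a documentation-only IP range (203.0.113.0/24).
# A real scraping service would supply and manage its own, much larger pool.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def assign_proxies(urls, proxies):
    """Pair each URL with the next proxy in round-robin order."""
    pool = cycle(proxies)
    return [(url, next(pool)) for url in urls]


def opener_for(proxy):
    """Build a urllib opener that sends both HTTP and HTTPS traffic through one proxy."""
    return build_opener(ProxyHandler({"http": proxy, "https": proxy}))


# Hypothetical search-result pages; the fourth request wraps back to the first proxy.
urls = [f"https://example.com/vinyl?page={n}" for n in range(1, 5)]
for url, proxy in assign_proxies(urls, PROXIES):
    print(url, "->", proxy)
    # opener_for(proxy).open(url)  # would send the real request (not run here)
```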

What are the Best Tools for Scraping Data?

WebScraper.io

Rating: 7/10

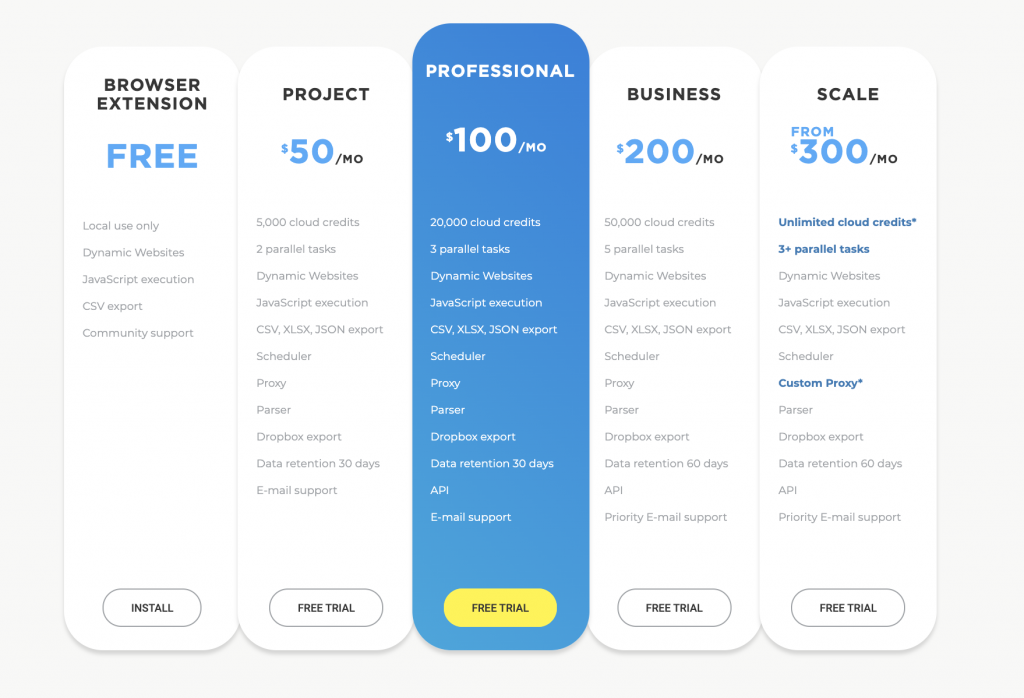

Cost: The browser extension is free, but you can only use it locally. If you’re not looking to complete high-volume scraping tasks, this extension is a great fit. You get a better reliability guarantee with the monthly plans, starting at $50/month. However, the starting plan only gives you 5,000 scrapes, which is pretty expensive when Scraping Robot gives you 5,000 scrapes for free every month. (I mean, come on.)

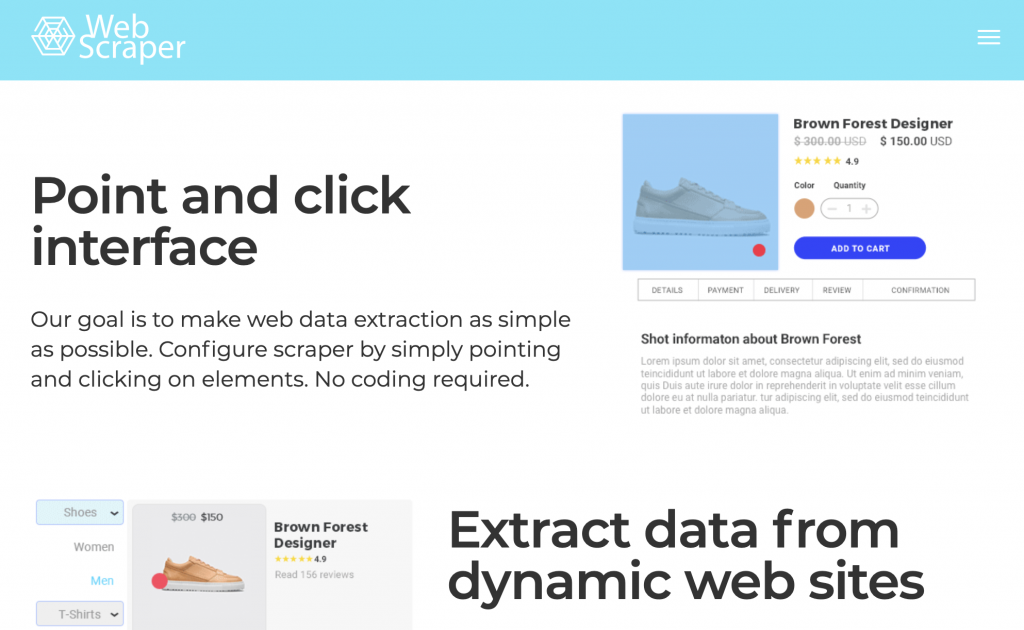

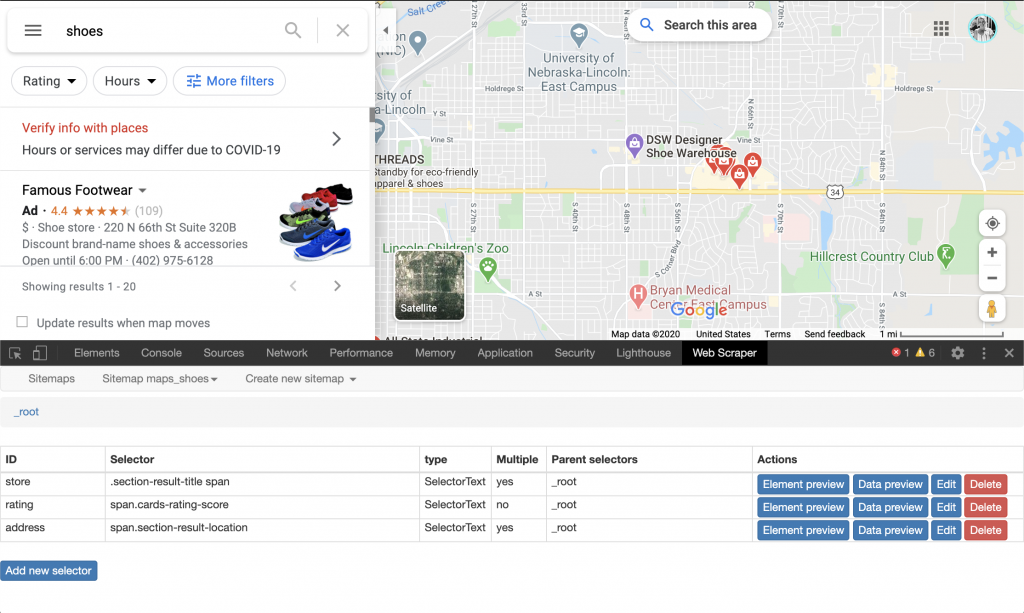

Ease of use: For beginners, the browser extension (I used Chrome) definitely has a bit of a learning curve, but the tutorials and documentation really help speed up the process. It’s pretty easy to download the extension to your browser and start using it with any page.

I think point-and-click tools are great for visual learners, especially those who don’t want to touch code with a ten-foot pole.

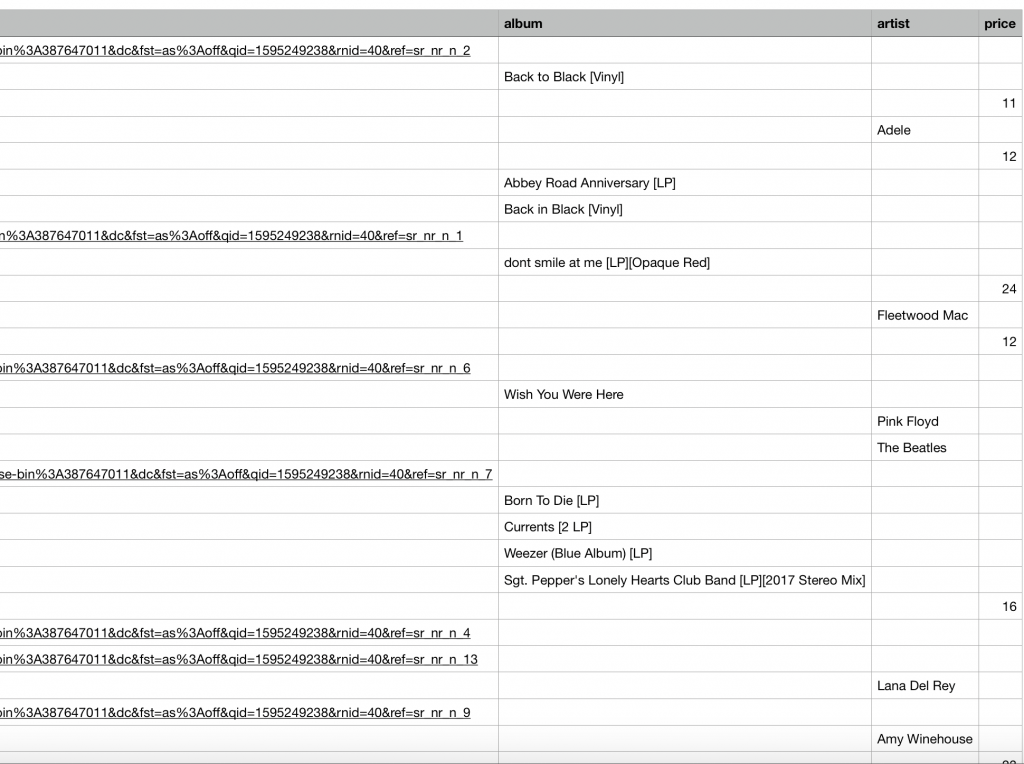

As you can see from this screenshot of my first attempt, there are many holes in my data (due to my improper selections). But once I played around with the data a bit and saw how everything was connected, I got what I needed.

JavaScript rendering: Yep! You can scrape dynamic pages with this bad boy.

Desktop software: WebScraper doesn’t offer a desktop version, but the cloud scraper still promises thousands of rotating IP addresses to ensure a robust proxy management system.

Conclusion: If you’ve never scraped anything before, you’re going to have to familiarize yourself with the terms and elements on a page, and play around with how you want your sitemap to look. When I first started scraping, I had no idea what these things were, so I found myself a little confused by this tool. However, the screenshots show that I was still able to get (most of) the data I wanted.

Parsehub.com

Rating: 5/10

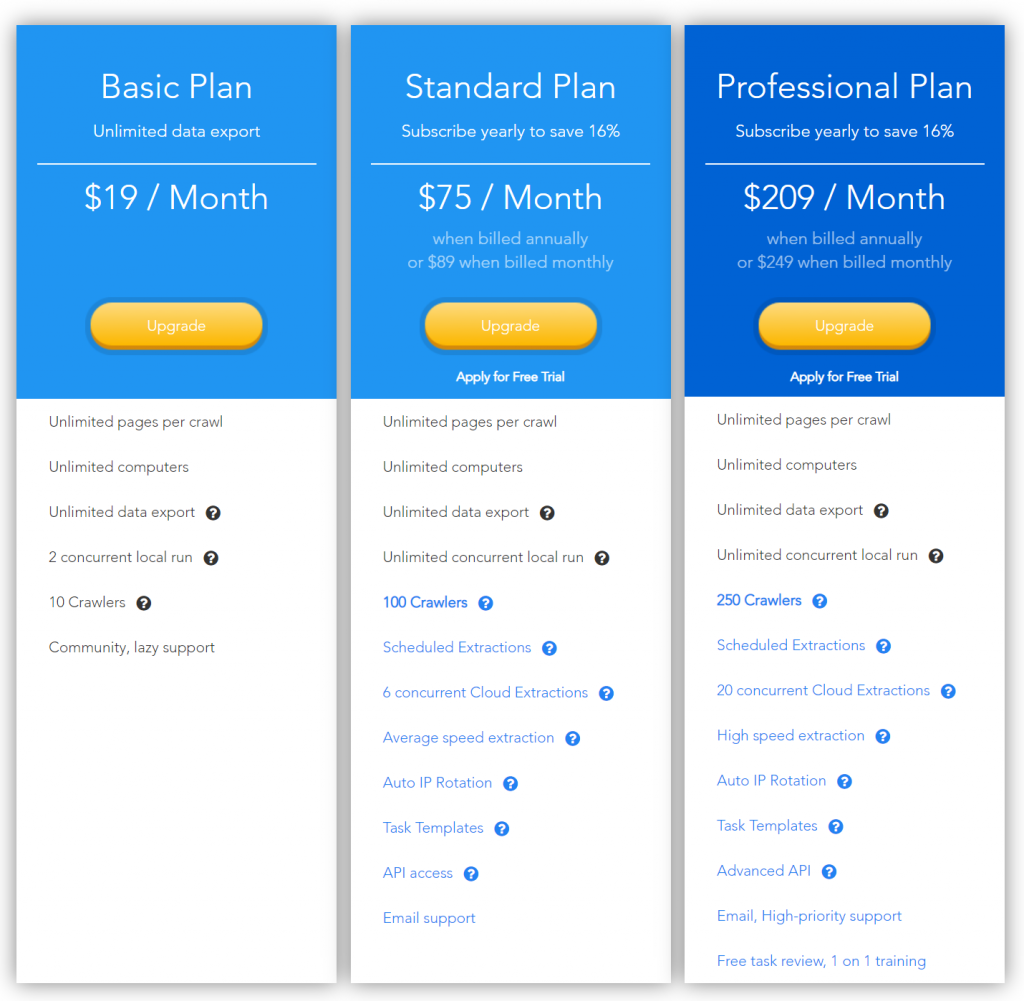

Cost: The free plan can get you what you need, as long as that’s only 200 pages of data. (If you’re not interested in big scraping projects, maybe that works for you. But generally, this is a pretty meager offer.) The paid plans start at $149/month, which guarantee more scrapes and other benefits, including “standard support.”

Now, if you’re willing to pay $499/month for the professional plan, ParseHub will grant you “priority” status, but sheesh. Even though the ParseHub desktop app might be fairly easy to use (I say “might be” because there’s still a steep learning curve for beginners), this lack of support on the free plan really turns me off. The quality of service you give your customers shouldn’t depend on how much they’re paying you. Boo, ParseHub.

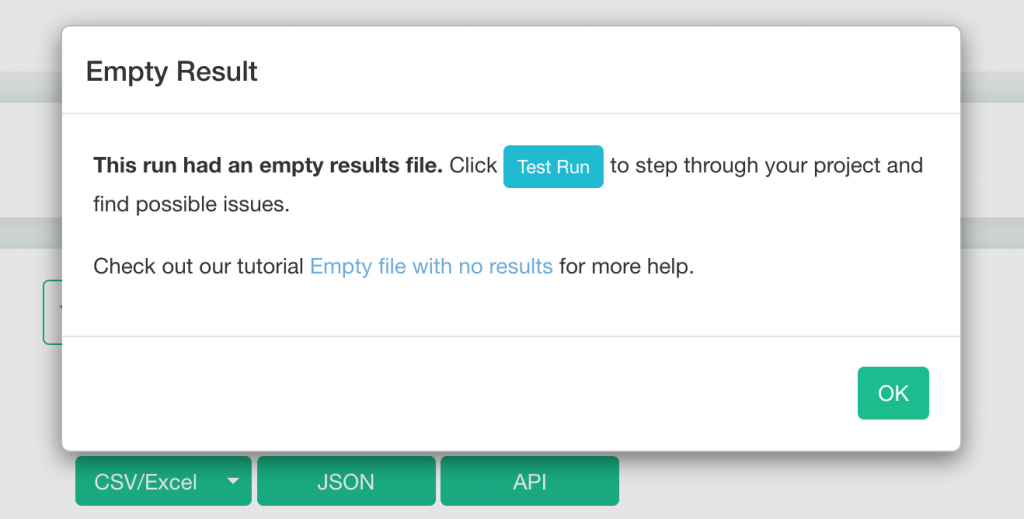

Ease of use: Honestly, there are a lot of commands to get used to in the desktop app, but the tutorials are pretty helpful. If you’re a beginner looking for quick data extraction, it’s going to take you a hot second to really understand the program. When I walked through the beginner tutorial, it was easy to get the data I needed, but I suppose that’s because they were holding my hand through it all (lol). When I set up my own sitemap to scrape Amazon vinyl prices and ran the project, I kept getting a message saying my results file was empty.

After reading the “Empty file with no results” tutorial article and running a test, it still wasn’t clear to me what was wrong with my project. The test seemed to run smoothly, and I could clearly see the preview data on my screen. However, when I ran the actual scrape, I continued to get this message as a result. I sent a message to support through ParseHub’s nifty in-app chat button, and got this (pretty quick) response:

It is not really possible to scrape Amazon on our free plan due to their use of bot detection technology. Users on our paid plans (https://parsehub.com/pricing) can take advantage of our IP Rotation, and custom proxies features to have success scraping Amazon.

Let me know if you have any questions about this!

Cheers,

Ben

Boom goes the dynamite.

JavaScript rendering: The free version can’t scrape Amazon because it can’t get past the bot detectors, but maybe it could scrape Google? You can see in the screenshot below that I could easily select the data I wanted to scrape.

However, when I ran the scraper, I got the same “empty results file” message. Now, Ben assured me that there are plenty of pages that can be scraped with the free ParseHub app, but I’m not going to waste my time figuring out what those sites are. I want to scrape Google Maps and Amazon, and the free scraper can’t do either one.

Desktop software: ParseHub does include automatic IP rotation, which is good news for people who want to pay for the plans (or for those who only want to scrape sites without bot detection on the free plan).

Conclusion: Beginners, if you want to try your luck and pay 149 bucks for the next plan, be my guest. I’m sure this tool is awesome for experts who need to scrape high volumes of data, but I’m not impressed by the lack of usability for beginners. The other tools in this article are far more versatile (and free), so they’re obviously going to be my first choice.

DataMiner.io Data Scraper

Rating: 7/10

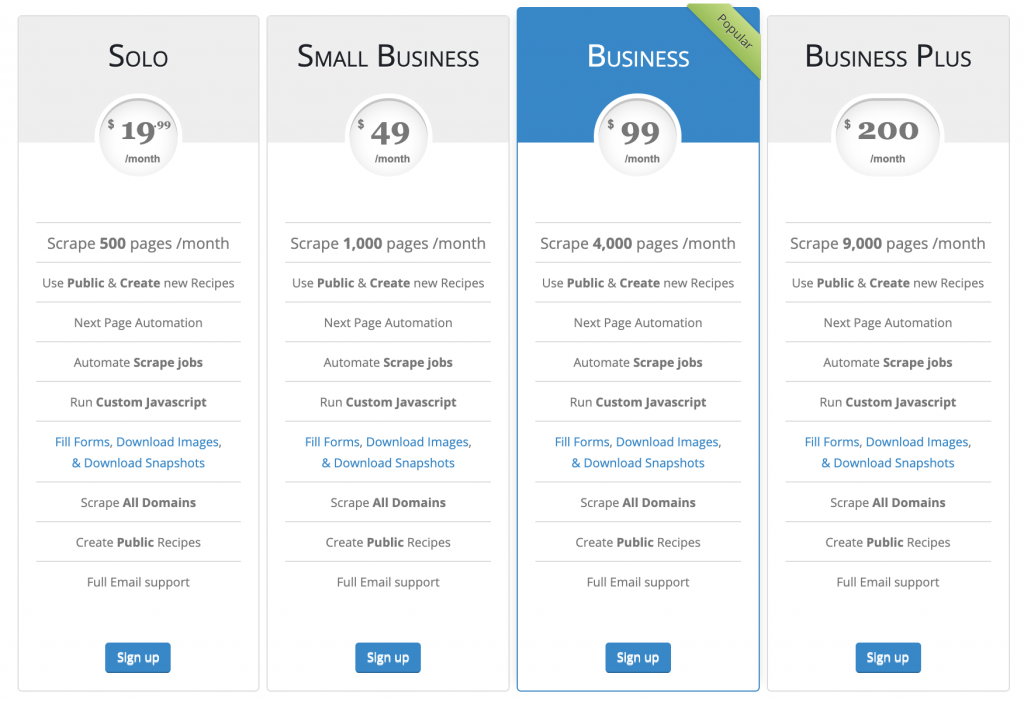

Cost: With the free browser extension plan, you can scrape 500 pages each month and use more than 50,000 Data Miner recipes (scraping templates created by other users). You can still create your own recipes and enable “next page automation” for your scrapes, but the free version won’t allow you to scrape all sites. The next plan starts at $19.99/month, which seems much more doable for beginners. However, it still only allows you to scrape 500 pages.

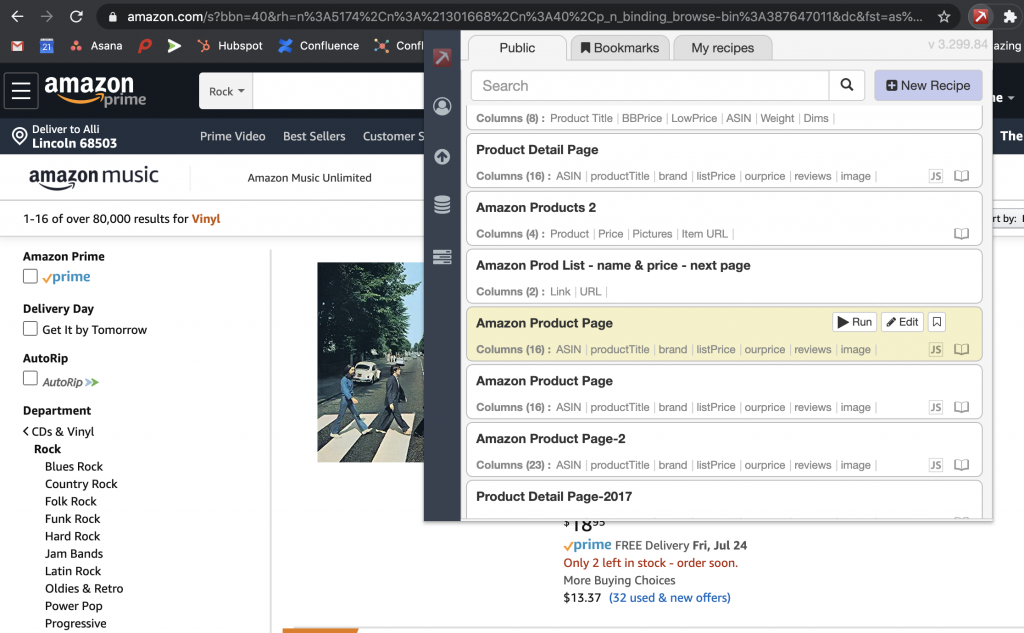

Ease of use: I was really impressed by the usability of this browser extension, especially for beginners. The access to existing recipes means you can get your data instantly, as long as there’s already a template for the data you want to scrape.

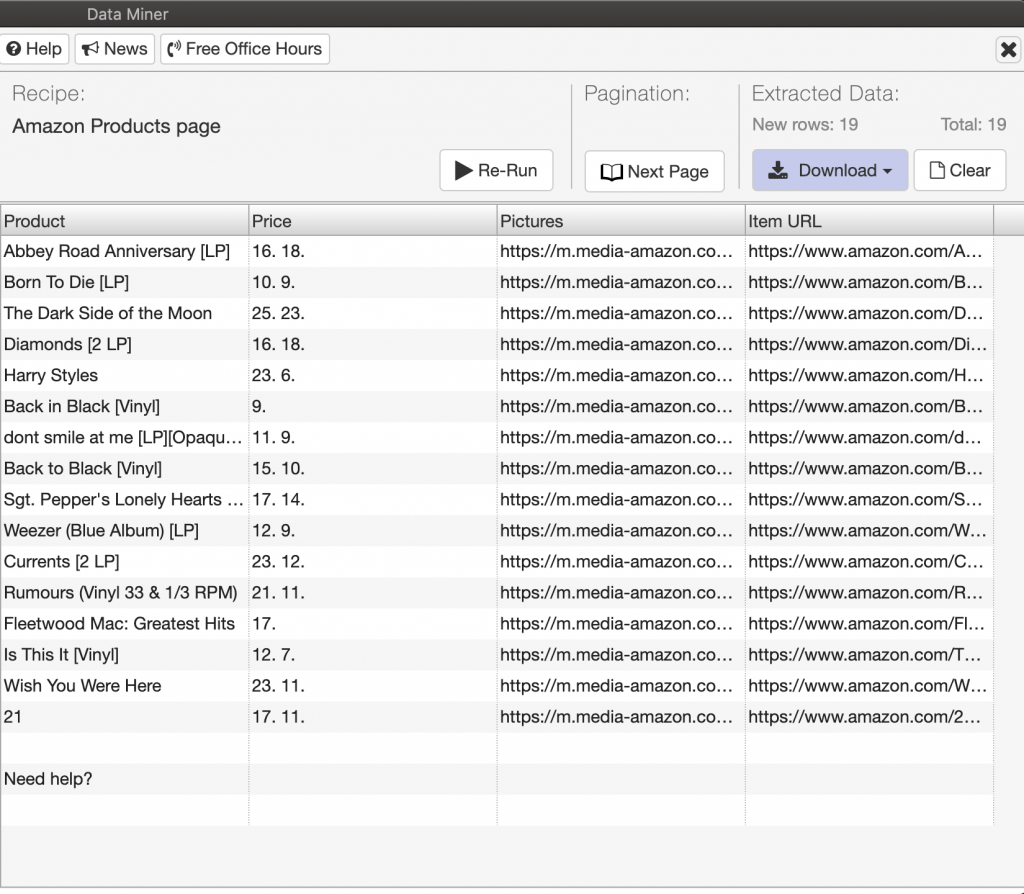

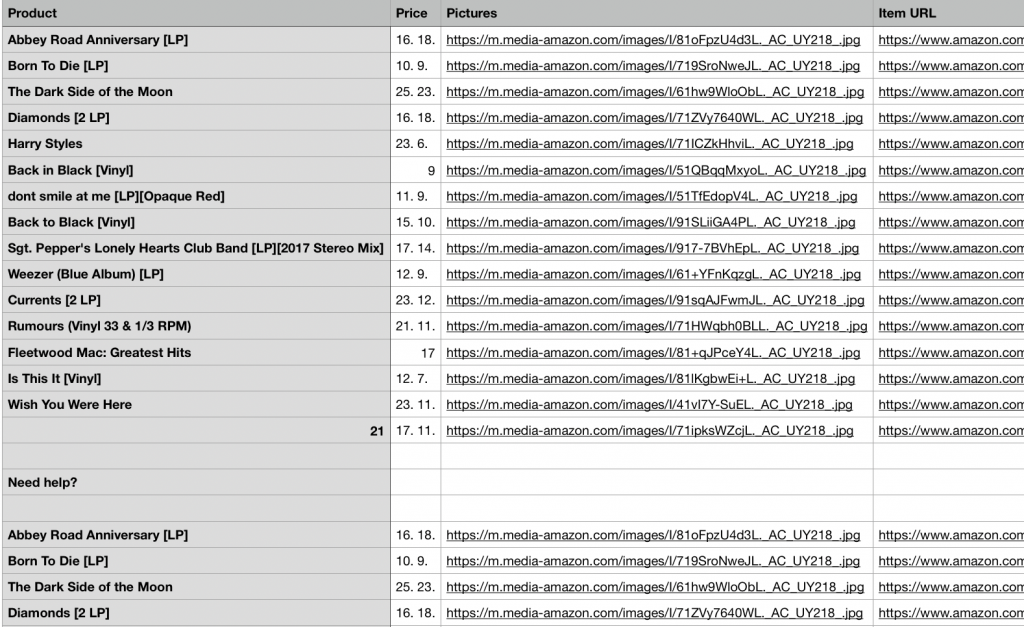

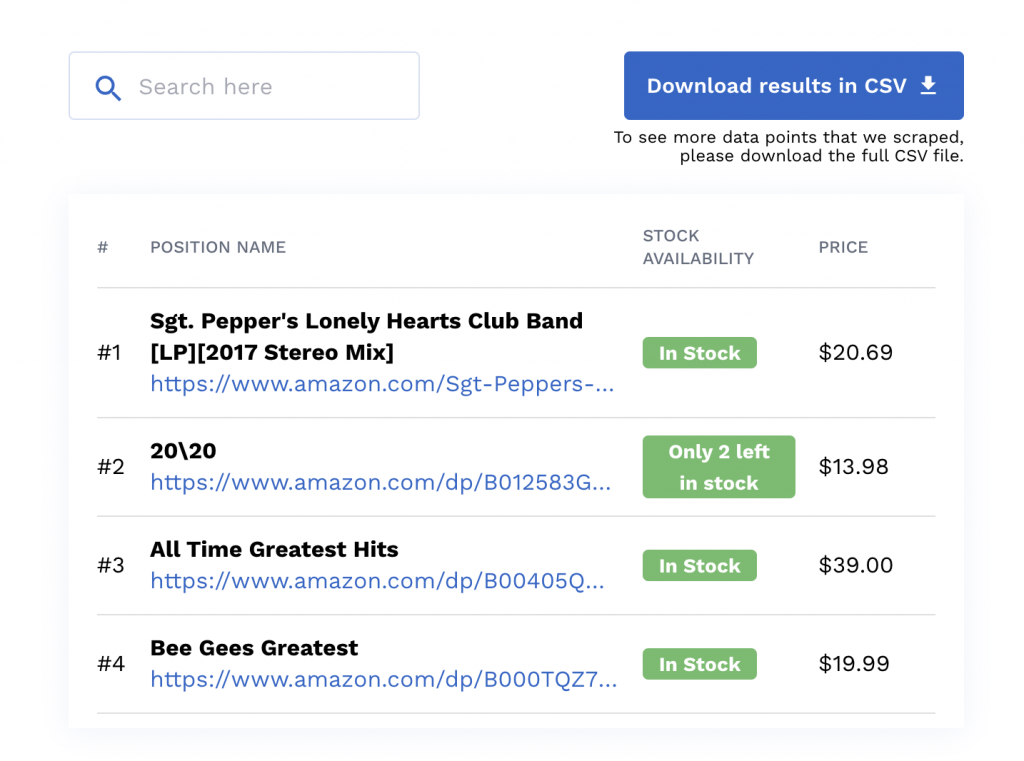

I used the “Amazon Product Page” public recipe, which allowed me to scrape the product price, URL and ratings of vinyls on Amazon.

In less than 2 seconds, I got easy-to-read data for the 19 results on that page.

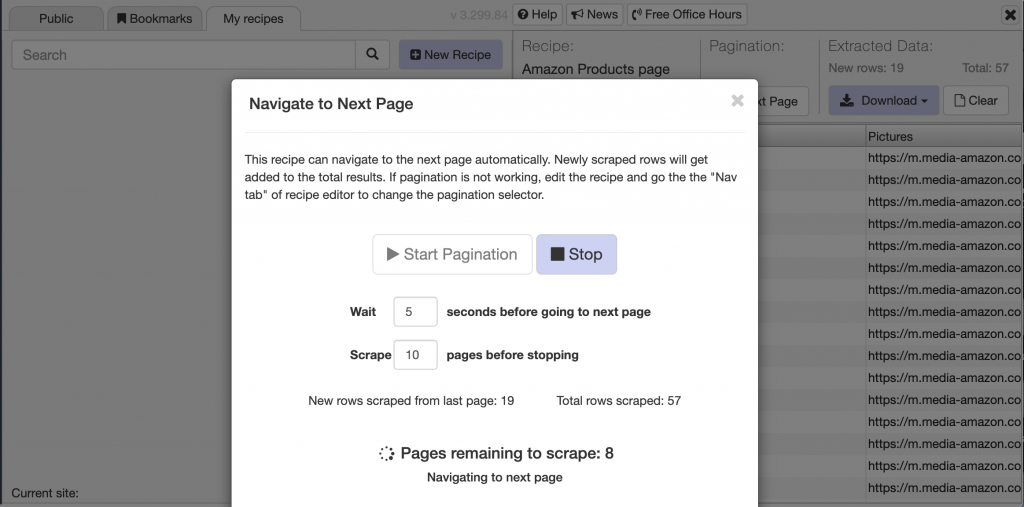

I wanted to try getting more results from more pages, so I clicked the Pagination tab on the scraper. The message below popped up, giving me the option of choosing how many seconds to wait between each page scrape, and how many results I wanted scraped from each page.

It didn’t work :/ As you can see from the screenshot below, these additional results were simply repeats from the first scrape. So, even though you can edit the scraping recipe, or make your own, there’s a bigger learning curve to the process than some beginners might be ready for. Even so, I’d say this is a good tool.
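For anyone wondering what that pagination setting boils down to, here’s a rough Python sketch. It assumes a hypothetical `fetch_page` function that returns one page’s results as dictionaries with a `url` key (that’s not Data Miner’s API, just a stand-in). It waits between pages, optionally caps the results per page, and skips anything it has already seen, which is a cheap way to catch the “repeats from the first scrape” problem I ran into.

```python
import time


def scrape_pages(fetch_page, page_count, delay_seconds=2.0,
                 max_per_page=None, sleep=time.sleep):
    """Collect results from pages 1..page_count, pausing between page requests.

    fetch_page is a hypothetical callable (page number -> list of dicts with a
    "url" key). Items whose URL was already seen on an earlier page are skipped,
    so a "next page" that just repeats page 1 adds nothing new.
    """
    seen = set()
    results = []
    for page in range(1, page_count + 1):
        if page > 1:
            sleep(delay_seconds)  # wait between pages, like the extension's delay setting
        for item in fetch_page(page)[:max_per_page]:  # a slice with None keeps everything
            if item["url"] not in seen:
                seen.add(item["url"])
                results.append(item)
    return results


# A fake site whose "next page" keeps returning page 1 (the problem described above).
def stuck_pagination(page):
    return [{"url": "/dp/A1", "price": "24.99"}, {"url": "/dp/A2", "price": "19.99"}]


# A fake site whose pages really differ.
def working_pagination(page):
    return [{"url": f"/dp/P{page}-{i}", "price": "9.99"} for i in range(3)]


no_wait = lambda seconds: None  # skip the delay so the demo runs instantly
print(len(scrape_pages(stuck_pagination, 3, sleep=no_wait)))                   # 2
print(len(scrape_pages(working_pagination, 3, max_per_page=2, sleep=no_wait)))  # 6
```

Passing `no_wait` only exists to make the demo instant; against a real site, you’d keep the delay so you aren’t hammering the server.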

JavaScript rendering: Yep, it’s obvious that this tool can scrape dynamic pages. Just find the right template (or be #brave and make your own), and you’re all set.

Desktop software: No desktop software for this one.

Conclusion: Like I already said, this tool’s recipes make it pretty easy for beginners to simply enter the URL they want to scrape and get all the information they need. If you want more versatility and customization, you’ll have to make your own recipe, but from what I found, there are plenty of public recipes to try first.

WebRobots.io

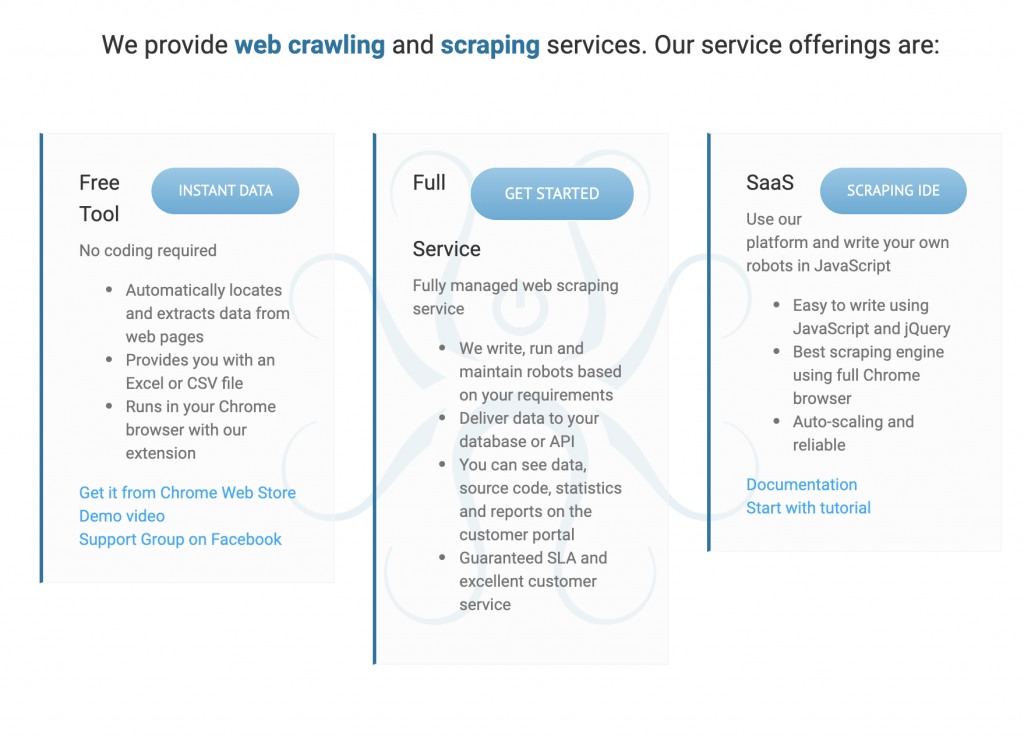

Rating: 8/10

Cost: The free browser tool has a lot of useful features for beginners, including automatic extraction into an Excel or CSV file. If you want full service, you can contact the company with your needs, but no prices are listed on their website.

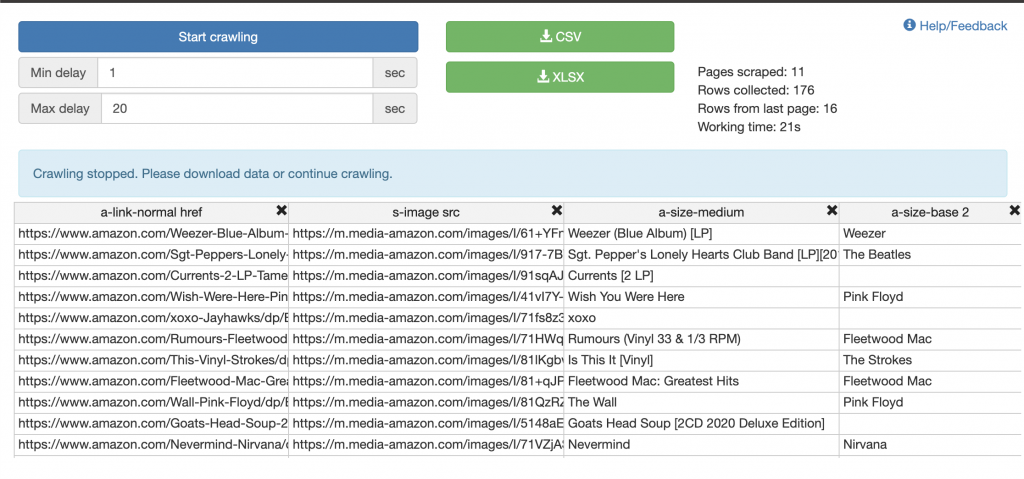

Ease of use: This extension is advertised as an “instant data scraper,” and they’re not joking around. It was pretty easy to scrape the following pages. In 21 seconds, it scraped 11 pages.

JavaScript rendering: Yep, you can definitely scrape dynamic pages with this tool. It took slightly longer to load the data (5 seconds instead of 2), but it still worked very quickly and made it possible to scrape the next page results, too.

Desktop software: Not a desktop tool.

Conclusion: The speed of this tool is really great. Some scraping tools only allow you to scrape the first page URL you entered, but what if there are 20 pages of results? This tool makes it very easy to scrape as many as you want, in very little time. There are plenty of helpful tutorials and knowledge base articles, which I’ve already said is a very big plus. The only downside to this tool (that I found, anyway) is the lack of customization. The tool can probably get you the data you’re looking for, but if you want to customize your results, you don’t get that option.

Import.io

Rating: 8/10

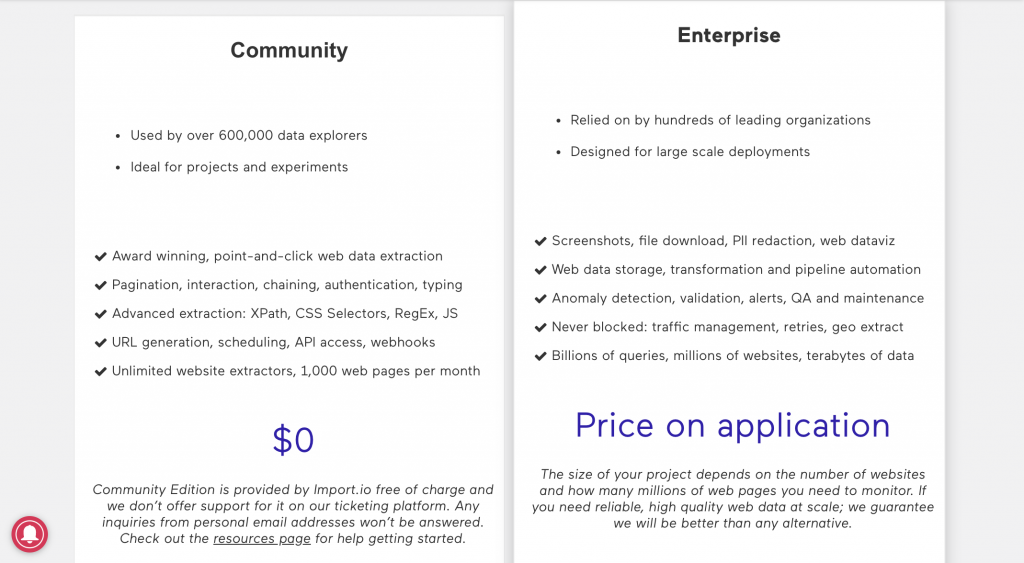

Cost: The community cloud version of this tool is free, giving you access to 1,000 web page scrapes per month. You can see the other features in the screenshot below.

Ease of use: For beginners, the “community” cloud scraping tool is great.

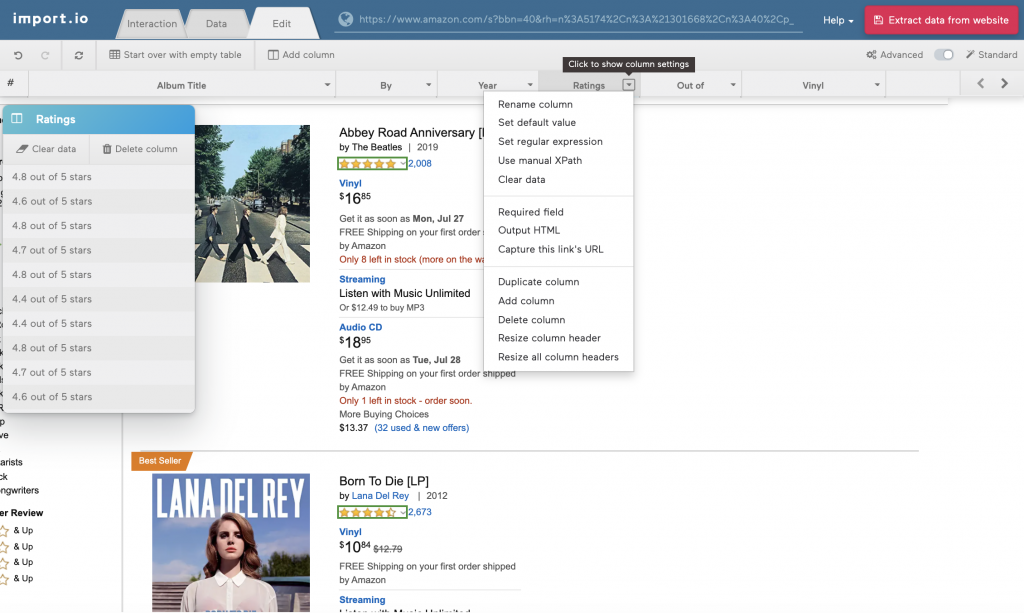

In this screenshot, you can see the columns across the top of the page, showing the data output I’m getting from this scrape. If you don’t want some of the data (say, the ratings), all you have to do is select “delete column” from the drop-down list, and that data won’t be included in your results. You can follow the same process to add a column of data.

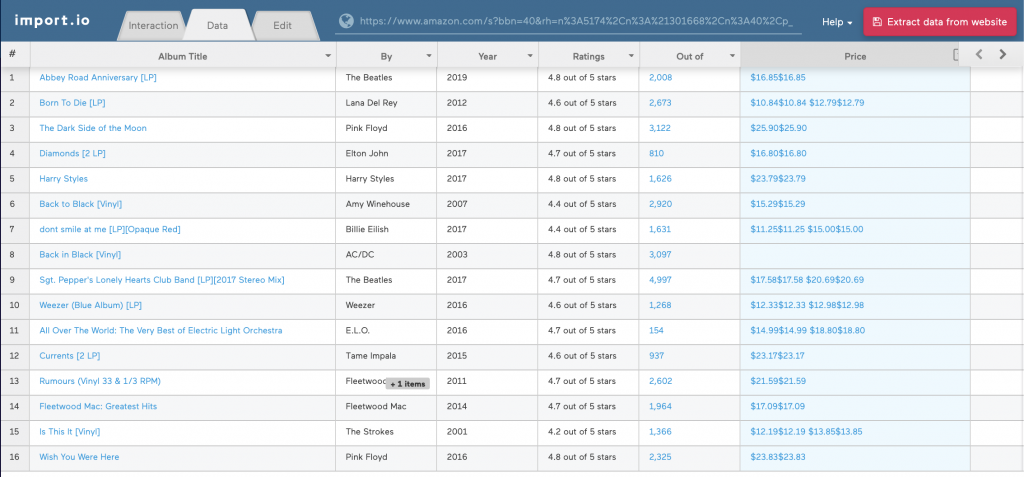

This is what the data looked like before I made my edits, but I wanted it to look a bit cleaner.

I entered the “edit” tab (shown in the top-left of this screenshot) and deselected the columns I didn’t want to show up in my data. I also renamed certain columns so they were clearer, and voila. This was my result.

Finally, I downloaded this data into a CSV file. With the community version, you can upload and share this data with the rest of the Import.io community, which is a great way to learn from other beginning and expert scrapers.
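If you ever want to do that same clean-up (drop a column, rename the rest, save as CSV) outside a tool, here’s a small Python sketch using the standard `csv` module. The rows and column names are made up for illustration; they’re not Import.io’s actual output format.

```python
import csv
import io

# Made-up scrape results, standing in for what a scraping tool might return.
rows = [
    {"product_title": "Rumours", "price_text": "24.99", "rating": "4.8"},
    {"product_title": "Abbey Road", "price_text": "29.99", "rating": "4.9"},
]


def to_csv(rows, keep, rename):
    """Return CSV text with only the `keep` columns, renamed via the `rename` map."""
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow([rename.get(col, col) for col in keep])  # header row
    for row in rows:
        writer.writerow([row.get(col, "") for col in keep])
    return out.getvalue()


# Drop "rating" (like "delete column") and give the remaining columns clearer names.
print(to_csv(rows, keep=["product_title", "price_text"],
             rename={"product_title": "Title", "price_text": "Price"}))
```

To save a real file instead of printing, open it with `open("vinyl.csv", "w", newline="")` and hand that file to `csv.writer`; the `newline=""` keeps Python from adding extra blank lines between rows on Windows.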

JavaScript rendering: While this tool allowed me to load Google Maps into the extractor URL bar and manually select the proper elements for scraping, the scraper did not run effectively.

Desktop software: Not a desktop tool.

Conclusion: This was definitely the easiest tool I’ve used that has also given me the most useful information (other than Scraping Robot, but you can read my review of that tool at the end of this article). Not only is it fast, but it also just makes sense. If you run into any issues, you can access interactive tutorials that help walk you through the process.

Octoparse

Rating: 5/10

Cost: The free 14-day trial for Octoparse allows you to download the 8.1 beta desktop app. (However, make sure you set a reminder to cancel your subscription before the 14 days are up, or else you’ll be charged for that month.)

Ease of use: This tool can take a bit of time to get used to, since you have to navigate pages on your own and figure out how to make proper selections with the point-and-click tool. But if you just want to use a template, you can choose from several like the ones you see below.

I chose the “US URLs Amazon” template to scrape for Amazon vinyls, which includes output data like title, price, ASIN and URLs. However, instead of entering a URL you already know you want to scrape, you simply enter keywords that the scraper will collect data for.

In this case, I entered “rock vinyls” and “rock vinyl records.” You can choose how many pages to scrape, but only up to 5. (I chose 5, of course.) And, you can enter the zip code you want to search.

After about 5 minutes, the run said it completed successfully, but wait…no data to show for it. I thought using the templates would be easier than manually clicking through the info I wanted to scrape, but it appears that even Octoparse’s templates have a learning curve.

JavaScript rendering: Octoparse can scrape dynamic websites, but as I found with other tools, the point-and-click functions can be a little tricky to understand. After playing around with the tool for a while, I got the hang of it, but I’m not sure I’d choose this app over some of the other tools in this article.

Desktop software: Yep, this desktop app offers automatic IP rotation on all plans, reducing your risk of getting banned.

Conclusion: I know there’s a lot of potential for this tool, but I just don’t have the time and patience to let that manifest itself. Since I don’t think I’m the only one who feels that way, I wouldn’t recommend this tool to most beginners.

Agenty

Rating: 7/10

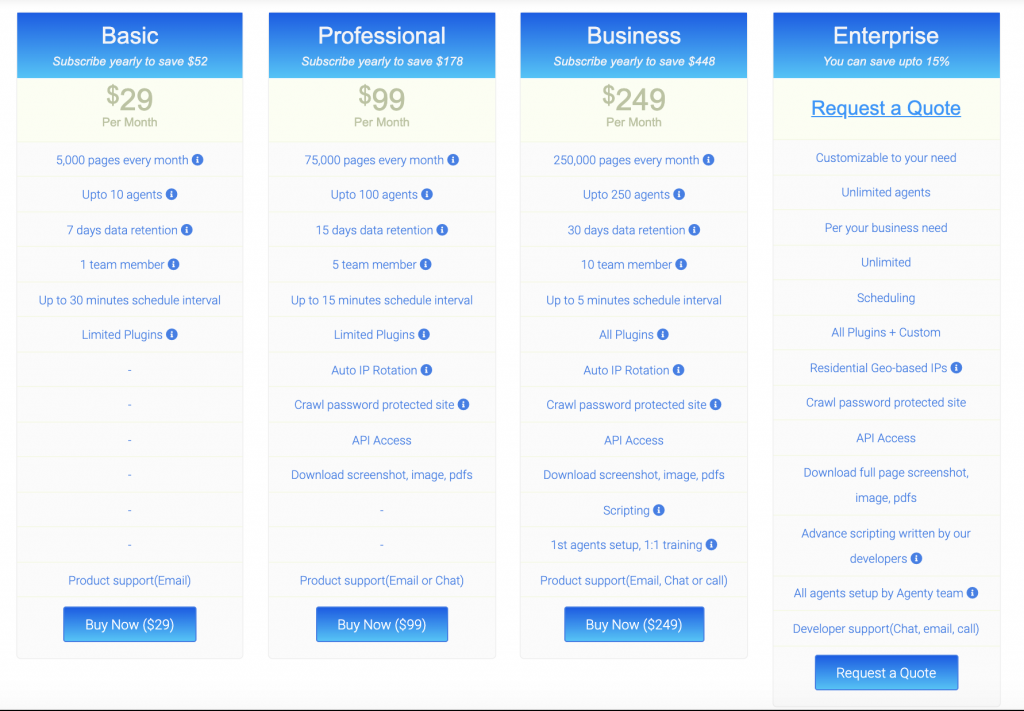

Cost: With the 14-day free trial of this Chrome extension, you get 100 free page scrapes. The next plan starts at $30 per month, giving you access to 5,000 page scrapes for one user.

Ease of use: This tool was a bit trickier to get the hang of than others, but I found the video tutorials very helpful. If you want to complete more advanced actions, there’s going to be a bit of a learning curve.

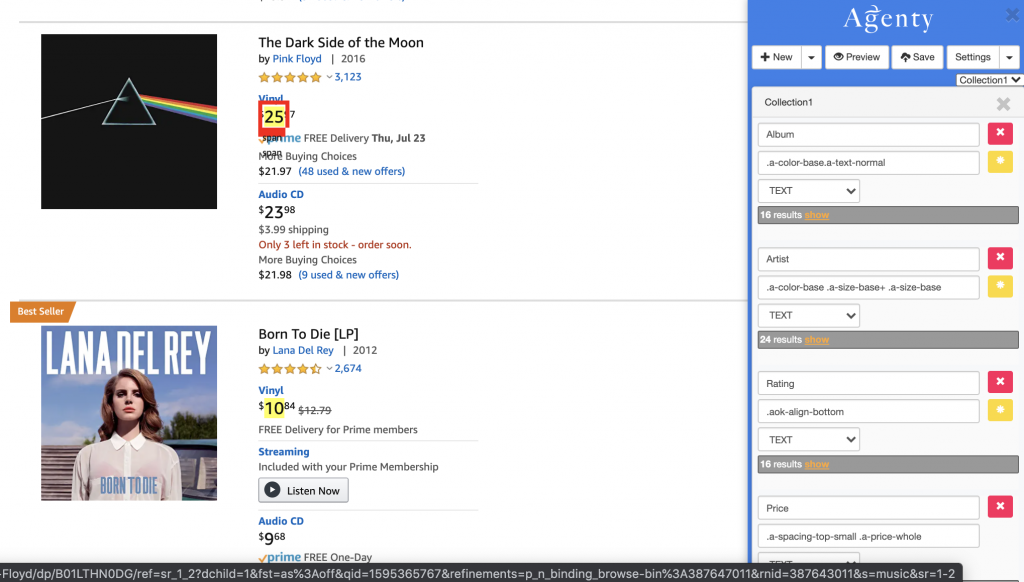

You can see the price values that I selected, and their corresponding scraping command on the bottom right side of this screenshot. Agenty makes it easy to select these values, but its automatic selection tool can be a bit tricky if you don’t select the content in the exact right spot. However, you can easily deselect the content you don’t want to be included in the scrape by simply clicking it again.

Here’s the data I received after running this scrape. I couldn’t figure out how to select the decimal values for each price, so I just had to settle for the dollar amount. (Amazon’s code separates these two values.)
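For the curious, here’s roughly what stitching those two values back together looks like. It’s a Python sketch that assumes the dollars and cents sit in separate spans with the class names `a-price-whole` and `a-price-fraction`; that’s an assumption based on Amazon’s markup at the time of writing, and Amazon can change it at any time. The sample HTML is made up to match that assumed structure.

```python
from html.parser import HTMLParser

# Assumed markup: dollars and cents live in separate spans. The class names
# are an assumption about Amazon's pages and may change without notice.
SAMPLE = ('<span class="a-price-whole">24<span class="a-price-decimal">.</span></span>'
          '<span class="a-price-fraction">99</span>')


class PriceParts(HTMLParser):
    """Collects the digits found in the 'whole' and 'fraction' price spans."""

    def __init__(self):
        super().__init__()
        self.whole = []
        self.fraction = []
        self._target = None  # which list the next piece of text belongs to

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "a-price-whole" in classes:
            self._target = self.whole
        elif "a-price-fraction" in classes:
            self._target = self.fraction

    def handle_endtag(self, tag):
        if tag == "span":
            self._target = None

    def handle_data(self, data):
        if self._target is not None:
            # Keep digits only, dropping the "." separator and any "," as in 1,299.
            self._target.append("".join(ch for ch in data if ch.isdigit()))


def combine_price(html):
    """Join the two halves into one price string, e.g. '24.99'."""
    parser = PriceParts()
    parser.feed(html)
    parser.close()
    whole = "".join(parser.whole)
    fraction = "".join(parser.fraction) or "00"  # no cents span: assume .00
    return f"{whole}.{fraction}"


print(combine_price(SAMPLE))  # 24.99
```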

However, Agenty seems very on top of their customer support. After running this first trial, I received an email from an Agenty support representative, who said he noticed that my scraping agent was incomplete. He took the initiative to fix my agent, and it now shows the complete pricing information.

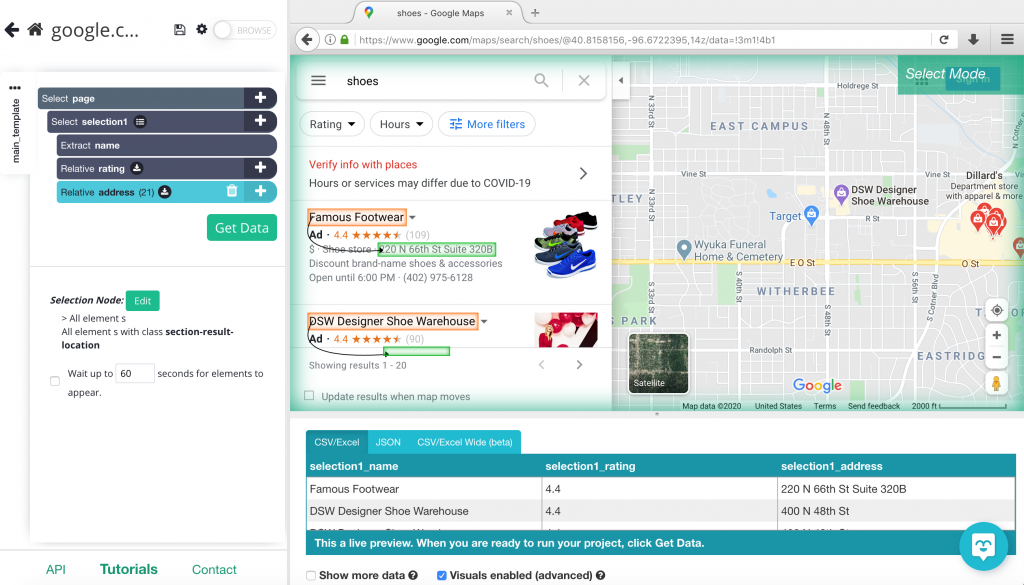

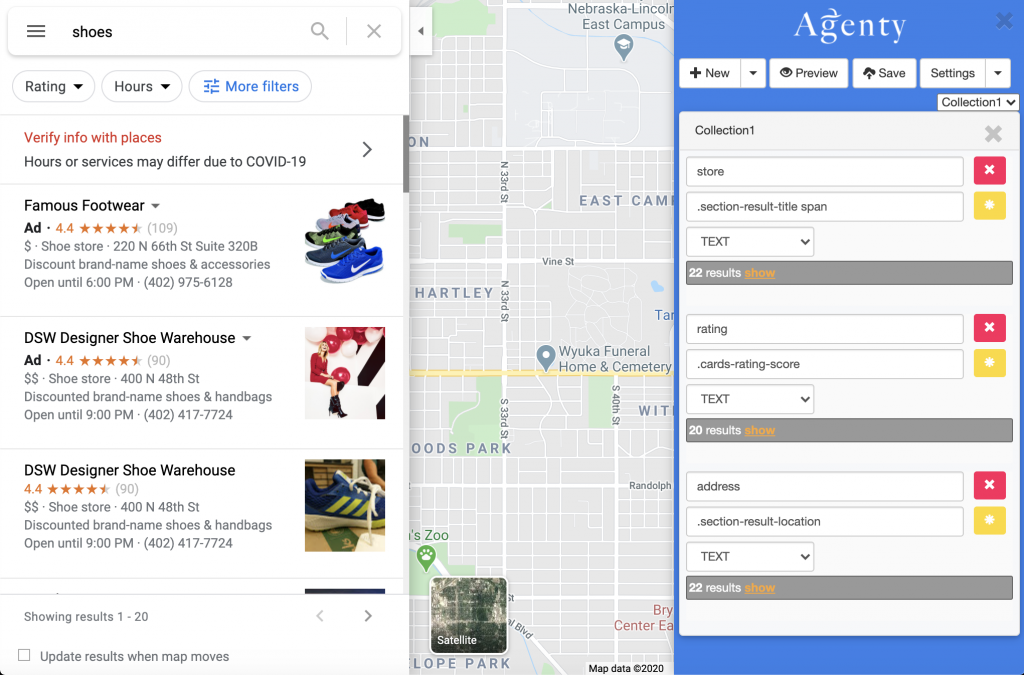

JavaScript rendering: Yep, this tool scrapes dynamic pages pretty easily. Here’s the screenshot of the selector tools, which should give me the store name, rating and address (based on my own point-and-click selections and naming).

However, after waiting at least ten minutes for the data to load, I gave up. It doesn’t look like this tool can scrape a website like Google Maps, or at least, it can’t do so quickly.

Desktop software: Not a desktop tool.

Conclusion: This tool served its purpose, but it didn’t really stand out to me from the rest. I like its visual interface and easy point-and-click commands, but everything after that felt a little complicated to me. However, I was very impressed with the initiative of the customer support team, so it’s clear that you will find the help you need with this platform.

Scraping Robot

Rating: 9/10

All righty, I’ll be honest. Of course I’m biased toward Scraping Robot. But that’s because I really do believe in our product, and as a beginner myself, it proved to be the easiest, most helpful tool out there. But, just like I did for the other tools in this article, I’ll give my honest review, and you can decide for yourself if it fits your needs.

Cost: Scraping Robot definitely gives the best deal of all these tools for beginners. With the free plan, you get 5,000 free scrapes per month. After that, you can use our pricing calculator to determine exactly what you’ll be paying for the number of scrapes you need.

Ease of use: Like I said, Scraping Robot was definitely the easiest tool for me as a beginner. But unlike the other tools in this article, it’s not built as a point-and-click tool. All you have to do is select one of the pre-built modules to get automatic output data.

After making an account, you can create new projects with these modules. If you want to scrape pricing data with the Amazon module, you’ll have to enter each individual product URL.

Since this is a little different than the other scraping tools I reviewed in this article, it might not be the best option for all beginning scrapers (especially if you want a quick list of data.) However, if you already have a list of URLs, you can easily upload that list to receive the data you need.

JavaScript rendering: Yep, Scraping Robot can scrape dynamic web pages, but you have to use the right module for the job (like our Google Places scraper).

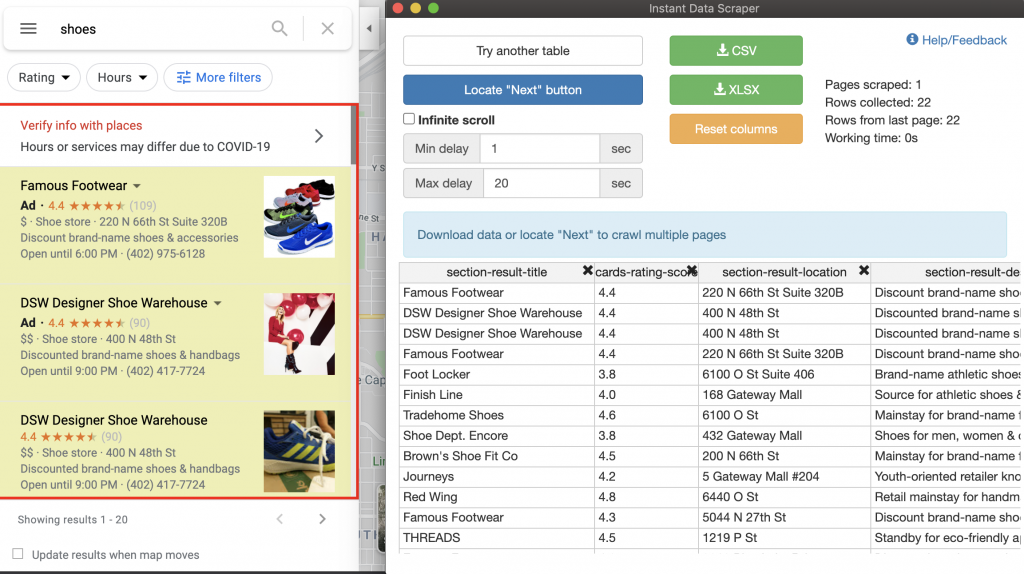

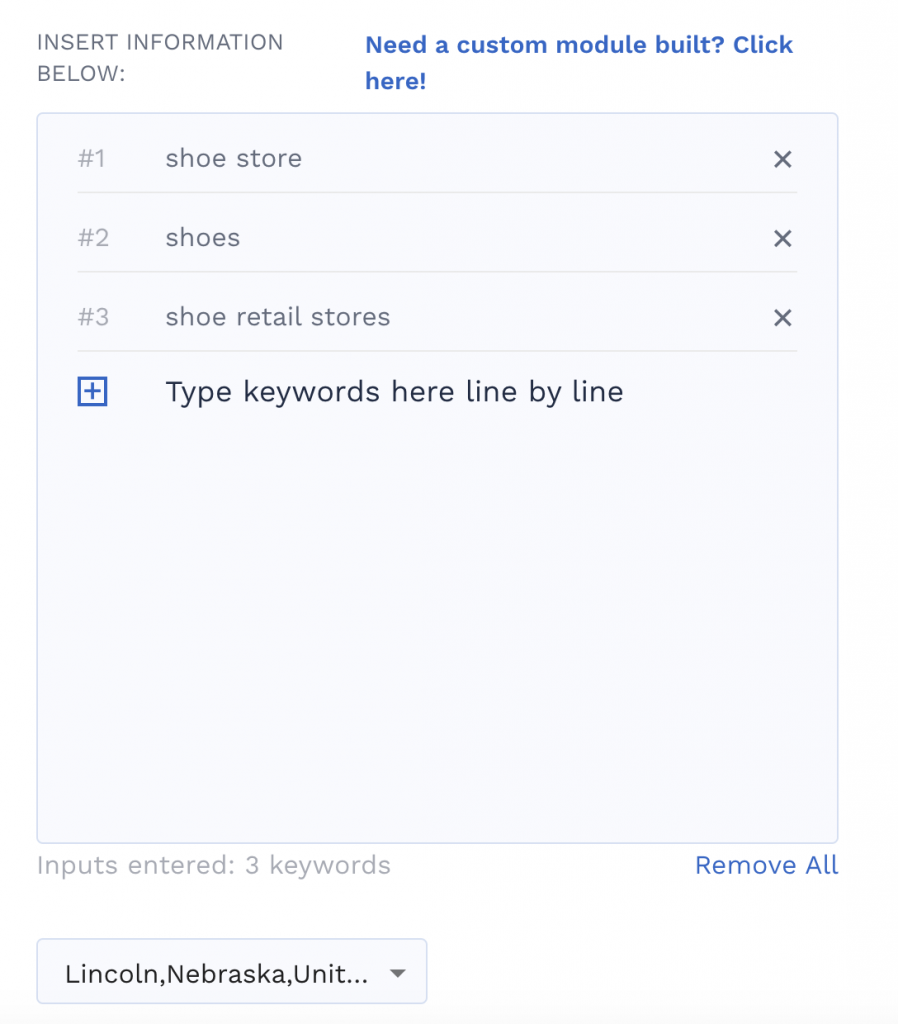

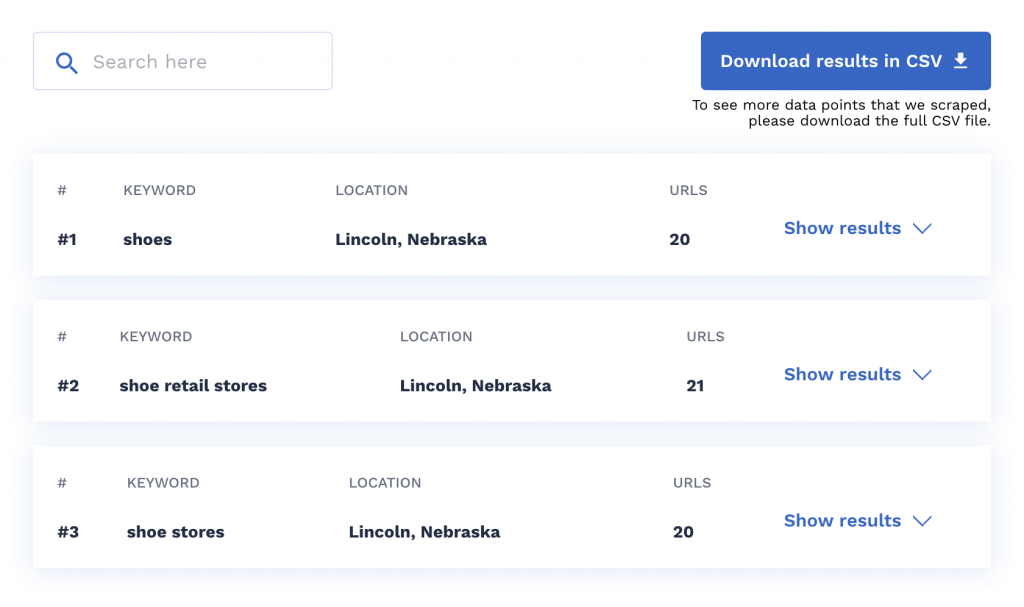

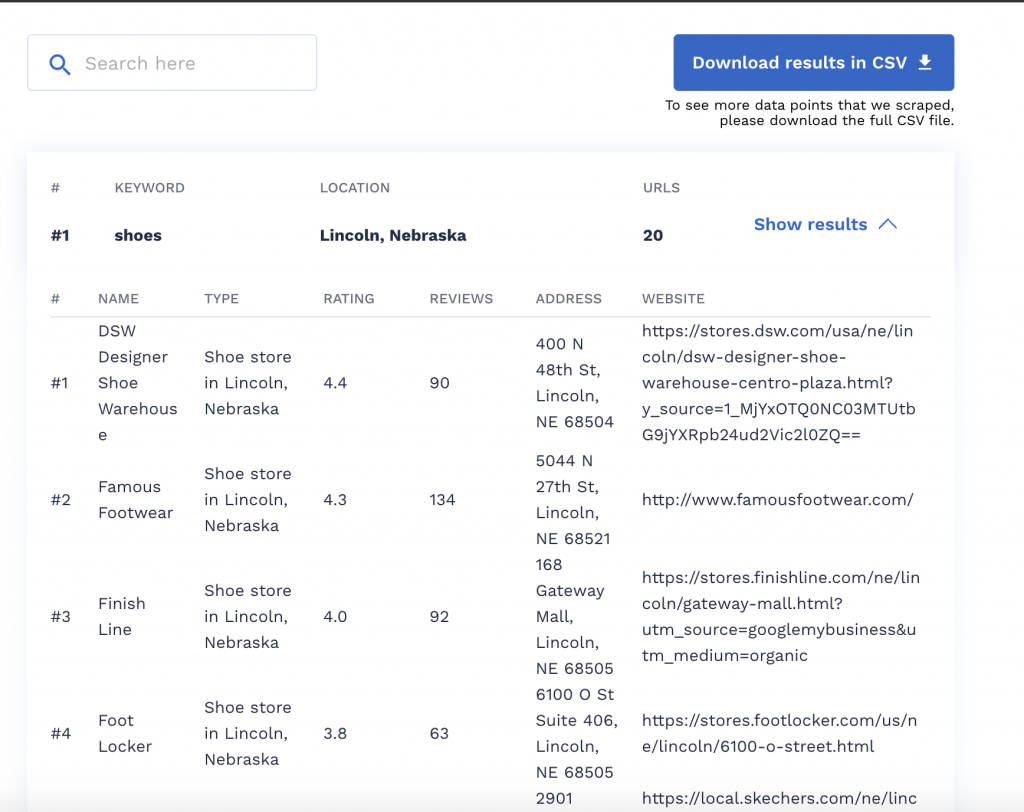

This scraper works a little differently than the other tools in this article, allowing you to enter a keyword and location right into the module (like “shoes”). Then, Scraping Robot will gather the list of locations and places containing that keyword from Google.

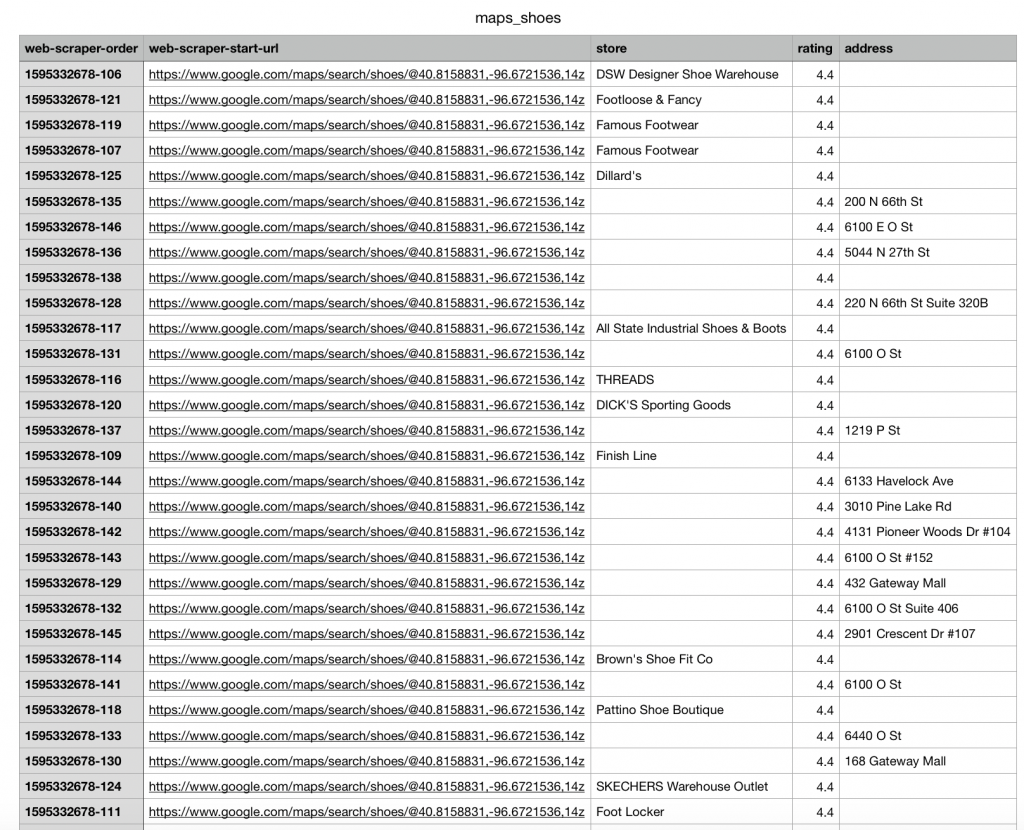

Here’s an example of some data I received from those scrapes:

I really think this is the most helpful way to run a scraping tool for beginners. Instead of having to navigate point-and-click commands, I just have to enter the keywords or URLs I want to search for, and the Scraping Robot module does the rest for me. This is definitely my preferred way to scrape.

Desktop software: Scraping Robot is actually in the process of developing a desktop software app! If you’d like to become a beta customer to help us build the best product, visit our beta customer information page to learn more.

Conclusion: Scraping Robot is a great tool for beginners who don’t want to navigate point-and-click interfaces. If you just want to enter your keywords and URLs, you’ll get tons of useful information. If you want more control over customizing your data, however, you can either use our APIs with your own robot, or reach out to us with details about your unique needs.

Final Thoughts About the Best Web Scraping Tools

So, there’s my honest opinion about these popular scraping services that show up in nearly every search for “best scraping tools.” Yes, I know I probably missed some. So if you hear of a tool that you think should be reviewed and included in this list, let us know! We’ll add it. But the point of this article, like I’ve said so many times already, is to just give you a start.

Data is out there, just waiting to transform your personal and professional life. But there’s a freaking ton of it out there, so knowing what to do about it can feel kind of scary. So scary that we end up doing nothing at all. So, let’s break that cycle. Scraping Robot knows the value of data, and it’s our job to make that data accessible for everyone.

The information contained within this article, including information posted by official staff, guest-submitted material, message board postings, or other third-party material is presented solely for the purposes of education and furtherance of the knowledge of the reader. All trademarks used in this publication are hereby acknowledged as the property of their respective owners.

Some Biographical Info